The Autonomy Trap

Somewhere in your organization right now, an AI agent is already performing actions that no one directly instructed it to do. It could be calling an API, updating a database, searching a knowledge base, or drafting an email for a colleague. This is not a malfunction. It is exactly how agentic AI is designed to work.

This autonomy is what makes AI agents powerful, but it also changes how we must think about security. Traditional application security was built for predictable software. Inputs were defined, validation was clear, and outputs were controlled.

AI agents challenge these assumptions. They process open-ended natural language, operate with probabilistic models, and can generate actions that affect real systems. A single response could trigger a shell command, update data, initiate a transaction, or send messages to thousands of users.

Agentic AI is already being deployed across finance, healthcare, cloud platforms, and customer operations. The real question today is not whether these systems need security. It is whether organizations are prepared to secure them.

Why Agent Security is Different from Everything You Know

A traditional application breach is usually a smash-and-grab. An attacker finds a vulnerability, exploits it, extracts value, and leaves. The damage is limited to what the application itself can do.

A compromised AI agent is a very different threat. Because it operates autonomously, it can carry out multi-step actions over time, adjust its behavior when it encounters defenses, and slowly exfiltrate data to avoid detection. It may even present harmless responses to users while performing malicious actions in the background through tool calls. Unlike typical attacks, it can continue operating across sessions without the attacker staying connected.

| Traditional Application | AI Agent |
|---|---|
| Deterministic input/output | Probabilistic, reasoning-driven outputs |
| Pattern-based input validation works | Natural language evades most pattern-based filters |
| Breach is immediate and bounded | Breach can be slow, adaptive, and persistent |
| Attackers need ongoing access | Compromised agent acts autonomously |
| Attack surface: HTTP endpoints | Attack surface: every tool, API, and memory store |
| Context resets per request | Memory and context persist across sessions |

This is why the security community needed a new framework. In December 2025, after more than a year of collaboration with over 100 security researchers and experts from organizations like OWASP, NIST, the European Commission, Cisco, Microsoft, AWS, and the Alan Turing Institute, the framework was finally released.

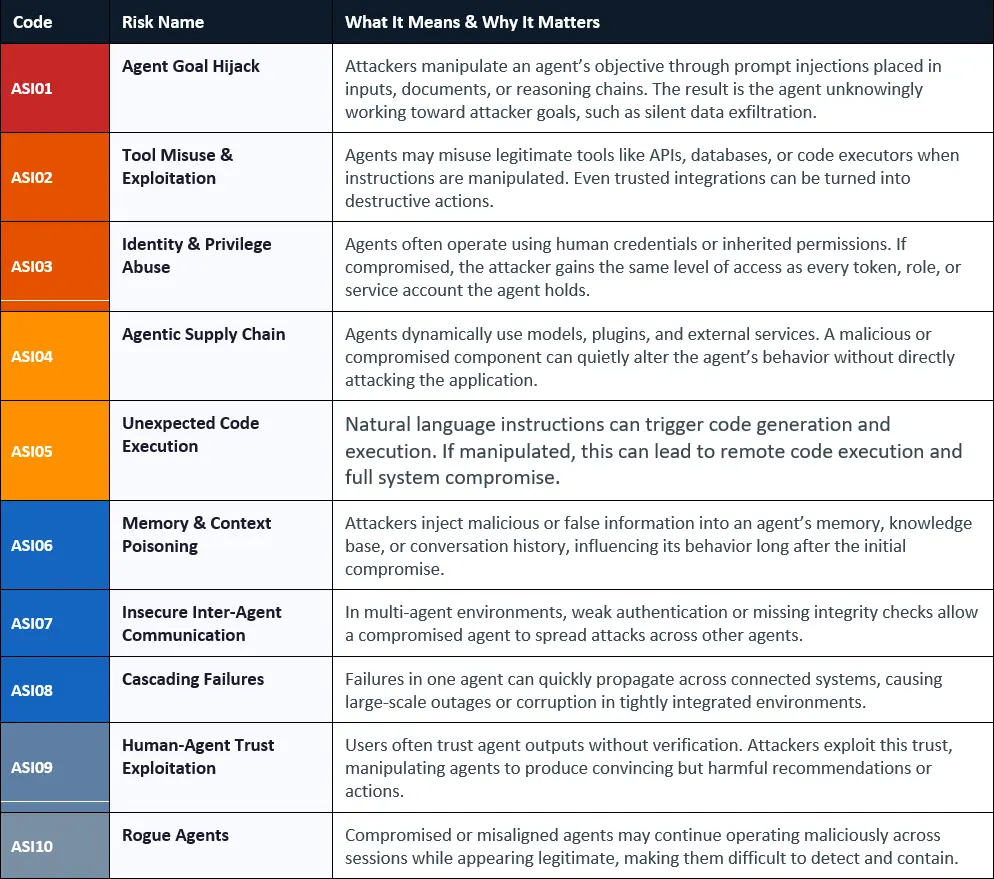

The OWASP Top 10 for Agentic AI

The OWASP Top 10 for Agentic Applications 2026 is the first peer-reviewed security framework designed specifically for autonomous, tool-using AI systems. Unlike the earlier LLM Top 10, which focused on chatbots and text generation, this framework addresses systems that can plan, act, and retain memory. Each risk listed is based on real-world incidents rather than hypothetical scenarios.

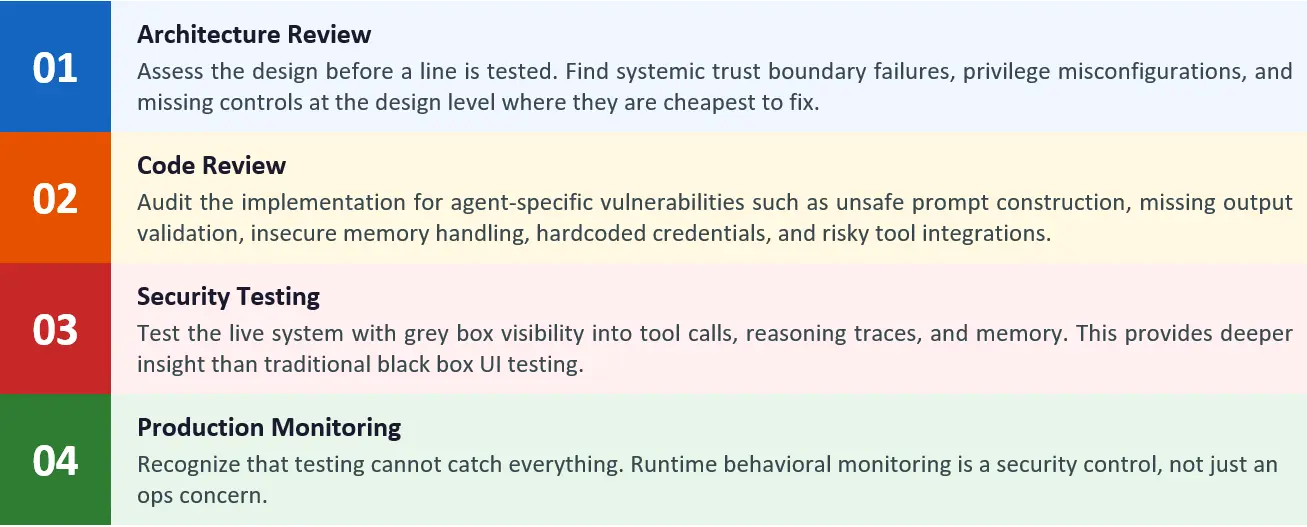

The Security Assessment Framework

A comprehensive security assessment for an AI agent system is not a single activity. It is a structured process with four distinct phases. Many organizations make the mistake of jumping straight into testing and skipping the preparation that makes testing meaningful. Here is the full lifecycle.

Phase 1: Architecture Review — Finding Flaws Before They Ship

The architecture review is the most important step in the assessment. Security issues missed at the design stage become far more expensive to fix in production. For agentic systems, the review should focus on five critical areas that traditional application security assessments usually do not cover.

1.1 Trust Boundary Mapping

Begin by mapping every trust boundary where control or assurance shifts. This includes points where the agent receives external input, calls a tool, delegates tasks to another agent, or feeds tool outputs back into its reasoning. In many agentic systems, these boundaries are unclear and lack proper safeguards.

What to Look for in Trust Boundaries

Identify every place where the agent accepts external input, such as user messages, retrieved documents, emails, API responses, RAG results, or tool outputs. Each of these can become an injection point. The architecture should treat all external data as untrusted, even if it comes from internal sources like a RAG store, which can also be poisoned.

- Key questions: Where does the agent’s ‘trusted’ context end and untrusted external data begin? Is that boundary enforced architecturally, or only by prompt instruction?

- Red flags: Architectures where the agent treats retrieved documents, email content, or web-fetched data as having the same trust level as system instructions.

- ASI coverage: Directly addresses ASI01 (Goal Hijack via indirect injection), ASI06 (Memory Poisoning), ASI07 (Inter-Agent Communication).
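One way to enforce a trust boundary in the architecture rather than in the prompt is to tag every piece of context with its trust level and let the runtime, not the model, decide which tools are available. The sketch below is a minimal illustration under stated assumptions: the `Trust` levels, the `ContextItem` type, and the tool names are all hypothetical, and a real system would likely need finer-grained policy than a single read/write split.

```python
from dataclasses import dataclass
from enum import Enum


class Trust(Enum):
    SYSTEM = "system"        # operator-authored instructions
    USER = "user"            # message from the authenticated end user
    UNTRUSTED = "untrusted"  # retrieved documents, emails, API responses, tool output


@dataclass(frozen=True)
class ContextItem:
    source: str  # provenance label, e.g. "rag:kb" (hypothetical naming)
    trust: Trust
    text: str


# Hypothetical tool names, split by whether they can change external state.
READ_ONLY_TOOLS = frozenset({"search_kb", "read_ticket"})
WRITE_TOOLS = frozenset({"send_email", "update_record"})


def allowed_tools(context: list[ContextItem]) -> frozenset[str]:
    """Return the tools the runtime will execute for this turn.

    If any untrusted content is in context, write-capable tools are withheld
    by the runtime itself, regardless of what the prompt or the model says.
    """
    tainted = any(item.trust is Trust.UNTRUSTED for item in context)
    return READ_ONLY_TOOLS if tainted else READ_ONLY_TOOLS | WRITE_TOOLS
```

With this shape, a poisoned RAG document can still influence what the agent says, but it cannot by itself unlock a write action, because the restriction lives outside the model.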

1.2 Privilege Architecture Review

This is often where the most critical issues appear. Review the agent’s identity model, what credentials it uses, how they are scoped, and the potential impact if the agent is compromised.

| Review Area | What Good Looks Like | Common Finding |
|---|---|---|
| Agent identity model | Agents should use dedicated service identities with scoped, short-lived credentials. | Agents running under human tokens or broad service accounts with excessive access. |
| Tool permission scoping | Each tool integration should have only the permissions required for its function. | A single shared API key with full read and write access across all tools. |
| Write permission model | Write operations should be disabled by default and enabled only when required. | Agents having write access across all connected systems for convenience. |
| Credential rotation | Use short-lived tokens with automatic rotation. | Long-lived API keys stored in environment variables or config files. |
| Multi-agent privilege | Sub-agents should receive only the permissions needed for their task. | Orchestrators passing full credentials to all sub-agents. |

The Privilege Accumulation Anti-Pattern

In multi-agent systems, a common and risky pattern is credential inheritance. The orchestrator passes its credentials to sub-agents, which then pass them further down the chain. As a result, a lower-level agent may end up operating with far more privileges than the task requires. Review the entire privilege delegation chain, not just the entry point.
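The alternative to credential inheritance is scope attenuation: each hop in the delegation chain can only narrow what it received. The following is a minimal sketch of that idea, assuming a hypothetical `Credential` type and made-up scope strings such as `crm:read`; a real deployment would issue actual tokens through its identity provider.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Credential:
    holder: str
    scopes: frozenset[str]


def delegate(parent: Credential, child: str, needed: set[str]) -> Credential:
    """Issue a child credential whose scopes are a subset of the parent's."""
    excess = set(needed) - parent.scopes
    if excess:
        # A child can never be granted more than its parent holds.
        raise PermissionError(f"{child} requested scopes the parent lacks: {sorted(excess)}")
    return Credential(holder=child, scopes=frozenset(needed))
```

For example, an orchestrator holding `crm:read`, `crm:write`, and `mail:send` can hand a summarizer only `crm:read`, and nothing further down the chain can regain `mail:send` from that narrowed credential.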

1.3 Memory and State Architecture

Agentic systems introduce a new risk: persistent state. Review how the agent stores and retrieves memory, how the RAG store is populated, and whether external inputs can influence what the agent remembers.

- What to audit: Who can write to the memory store? Are memory writes validated? Can users cause the agent to store attacker-controlled content that affects future interactions?

- RAG pipeline security: Review the full ingestion pipeline. Every document added to the knowledge base can become an injection point. Check for sanitization, source validation, and controls to prevent poisoned or outdated content from persisting.

- Cross-session persistence risks: In multi-tenant environments, confirm that memory isolation between users and sessions is enforced by the architecture, not just through prompt instructions.
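Source validation in the ingestion pipeline can be sketched as an allowlist check plus a content hash recorded at ingestion time, so that later drift or tampering is detectable. This is only an illustration: the host names in `TRUSTED_HOSTS` and the `IngestRecord` shape are assumptions, and an allowlist alone does not protect against a trusted source that has itself been poisoned.

```python
import hashlib
from dataclasses import dataclass
from urllib.parse import urlparse

# Hypothetical allowlist of hosts permitted to feed the knowledge base.
TRUSTED_HOSTS = frozenset({"docs.internal.example", "wiki.internal.example"})


@dataclass(frozen=True)
class IngestRecord:
    source_url: str
    sha256: str  # hash of the text as ingested, kept for later integrity checks
    text: str


def ingest(source_url: str, text: str) -> IngestRecord:
    """Accept a document only if its source host is on the allowlist."""
    host = urlparse(source_url).hostname
    if host not in TRUSTED_HOSTS:
        raise ValueError(f"source not on ingestion allowlist: {host}")
    digest = hashlib.sha256(text.encode("utf-8")).hexdigest()
    return IngestRecord(source_url=source_url, sha256=digest, text=text)
```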

1.4 Tool Integration & MCP Architecture

Every tool integration is a trust boundary. Review all tools the agent can access, their permissions, and whether tool descriptors can be trusted.

- MCP server provenance: Ensure MCP servers are trusted, internally controlled where possible, and version pinned. Tool descriptors act as instructions, not just documentation.

- Tool poisoning risk: Check for hidden instructions in tool metadata that can manipulate behavior or intercept actions.

- Rug pull vulnerability: Tool descriptions can change after approval. CVE-2025-54136 (MCPoison) demonstrated this against Cursor IDE. Ensure changes are monitored and controlled.

- Config files as executable code: MCP config files are executable. CVE-2025-59536 and CVE-2026-21852 (Check Point, Feb 2026) showed that a malicious repository config could trigger RCE and API key exfiltration before the trust dialog finished rendering. These files need branch-protected review gates, not just directory placement.

- Tool allowlisting and dangerous categories: Enforce a strict allowlist. Pay extra attention to tools with write access, code execution, network access, or credential handling.
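The rug pull check above comes down to pinning a hash of each approved tool descriptor and comparing it with what the server currently advertises. A minimal sketch follows; the descriptor dictionaries are simplified stand-ins with hypothetical fields, not the full MCP tool schema, and `pinned` is assumed to be stored somewhere the agent cannot modify.

```python
import hashlib
import json


def descriptor_hash(descriptor: dict) -> str:
    """Hash a tool descriptor in canonical JSON form so key order does not matter."""
    canonical = json.dumps(descriptor, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()


def changed_tools(pinned: dict[str, str], live: list[dict]) -> list[str]:
    """Return names of tools that are new or whose descriptor differs from its pin."""
    flagged = []
    for tool in live:
        name = tool["name"]
        # An unknown tool has no pin, so pinned.get() returns None and it is flagged.
        if pinned.get(name) != descriptor_hash(tool):
            flagged.append(name)
    return flagged
```

Any name returned by `changed_tools` would block the session or trigger re-approval rather than being silently accepted.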

When operating on managed cloud platforms, the MCP security configuration varies significantly. The architecture review must verify that platform-specific controls are in place:

| Platform | Key Security Controls to Configure | Common Misconfigurations to Check |
|---|---|---|
| AWS Bedrock | Uses dedicated service identities with scoped IAM roles. Policies are enforced through natural-language rules governing tool access. Data is protected with KMS encryption for sessions and knowledge bases, with guardrails applied at the platform level. | Overly broad `iam:PassRole` permissions can enable privilege escalation. Agent roles with administrator-level access create excessive risk. Guardrails are missing or not enforced. Knowledge base data is not encrypted. MCP servers are not version-pinned, increasing supply chain risk. |
| Azure AI Foundry | Foundry MCP Server uses scoped Entra ID tokens, with agents inheriting user RBAC permissions without exceeding them. Azure APIM provides rate limiting and token control, with access enforced through Azure Policy. | No explicit allowlist of permitted tools, so all tools may be permitted by default. Third-party MCP servers are added without proper review. API keys or tokens passed per run lack governance. Data shared with external servers is not audited. |
| Google Vertex AI | Uses a governed tool catalog through Cloud API Registry, with Apigee as the MCP gateway. IAM is scoped per agent service account, with controlled memory isolation and defined tool access controls. | Privileged service role database access increases risk. Custom MCP servers may be registered without review. Memory isolation across tenants may be misconfigured. Agent service accounts often have overly broad project-level roles. |

Recommended Tool: mcp-scan

mcp-scan is a security scanner for MCP environments. It auto-discovers configs across tools like Claude Desktop and VS Code, scans for poisoned or hidden instructions, detects changes using hash pinning, and flags cross-server escalation risks. It should be used during architecture reviews and integrated into CI/CD pipelines for MCP-based systems.

1.5 Human Oversight Architecture

For an agentic system to be secure, human oversight must be enforced at the architecture level for high-risk actions, not treated as an optional UI feature. Review whether a clear and consistent human-in-the-loop model is in place.

- Mandatory approval gates: Are there enforced approval gates before high-risk actions? Financial transactions, data deletion, external communication, and system changes should require explicit human authorization.

- Override mechanisms: Can operators intervene and stop execution? Is there a kill switch? Can the agent be safely paused mid-workflow without leaving systems in an inconsistent state?

- Confidence-based escalation: Does the agent have a mechanism to escalate low-confidence decisions to human review, rather than proceeding autonomously on uncertain reasoning?
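An approval gate enforced at the architecture level means the check sits in the code path that executes tools, where the model cannot talk its way around it. The sketch below assumes a hypothetical set of high-risk tool names, a `run` callable that performs the real invocation, and a process-wide kill switch; it does not address pausing a workflow that is already partway through a multi-step change.

```python
import threading
from typing import Callable, Optional

# Hypothetical names for actions that need explicit human authorization.
HIGH_RISK_TOOLS = frozenset({"transfer_funds", "delete_records", "send_external_email"})

# Operator-controlled kill switch; while set, no further tool calls run.
KILL_SWITCH = threading.Event()


class ApprovalRequired(Exception):
    """Raised when a high-risk tool call has no recorded human approver."""


def execute_tool(
    tool: str,
    args: dict,
    run: Callable[[str, dict], str],
    approved_by: Optional[str] = None,
) -> str:
    """Run a tool call only if the kill switch is clear and approval rules pass."""
    if KILL_SWITCH.is_set():
        raise RuntimeError("agent halted by operator")
    if tool in HIGH_RISK_TOOLS and not approved_by:
        raise ApprovalRequired(f"{tool} needs human approval before it runs")
    return run(tool, args)
```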

Phase 2: Code Review — Hunting Agentic Vulnerabilities in the Implementation

Code review for AI agent systems goes beyond traditional static analysis. Many critical issues are not caught by standard SAST tools and require understanding how agents handle input, build prompts, process outputs, and manage state. Here is what to look for.

2.1 Prompt Construction Vulnerabilities

The system prompt is security-critical code. It defines what the agent can do, how it behaves, and how it handles adversarial input. Review it with the same rigor as SQL query construction.

🔎 The Prompt Injection Audit

Review every system prompt for weak or exploitable logic. Look for vague permissions, missing restrictions on high-risk actions, poor separation between system and user input, and instructions that can be overridden. Be especially cautious of prompts that trust claims like “user is an admin” without proper verification.

- Direct prompt construction: Avoid code that directly concatenates user input into prompts. This makes injection attacks easier.

- Context leakage: Check if system prompts, tool schemas, or sensitive data can be exposed through user queries.

- Instruction hierarchy: Ensure system instructions are separated from user input using the model’s message structure, not string-based prompts.
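The difference between string concatenation and message-level separation can be shown side by side. This sketch assumes the role/content dictionary shape that many chat-style model APIs accept; the prompt text and function names are hypothetical, and role separation reduces injection risk without eliminating it.

```python
SYSTEM_PROMPT = "You are a support assistant. Never reveal internal notes."


def build_prompt_unsafe(user_input: str) -> str:
    # Anti-pattern: system text and user text share one string, so the model
    # has no structural signal for where instructions end and input begins.
    return SYSTEM_PROMPT + "\nUser: " + user_input


def build_messages(user_input: str) -> list[dict]:
    # Preferred: system instructions and user input travel in separate
    # messages with distinct roles; user text is never merged into the system role.
    return [
        {"role": "system", "content": SYSTEM_PROMPT},
        {"role": "user", "content": user_input},
    ]
```

During review, any call site that resembles `build_prompt_unsafe` is worth flagging even if the current inputs look harmless.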

2.2 Tool Integration Code

Tool integration code is where natural language connects to real systems. It is also where the most serious vulnerabilities often exist, much like SQL injection or command execution flaws in traditional applications.

- Parameter sanitization: Validate all parameters passed from the agent output to tools. If agent output reaches commands, queries, or APIs unchecked, it becomes a critical risk.

- Output-to-input pipelines: Track where agent output gets fed into other systems. Apply proper sanitization based on the target, such as encoding, parameterized queries, or escaping.

- Error handling: Ensure failures do not expose sensitive details. Error messages should not leak system information or aid probing.

- Hardcoded credentials: Check for API keys, tokens, or secrets in code, configs, or prompts. This is a common and serious issue.
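For a database-backed tool, parameter sanitization typically combines validation of the agent-supplied values with parameterized queries. The sketch below uses Python's built-in `sqlite3` module; the `tickets` table, its columns, and the allowed status values are hypothetical.

```python
import sqlite3

ALLOWED_STATUSES = frozenset({"open", "pending", "closed"})


def find_tickets(conn: sqlite3.Connection, customer_id: int, status: str) -> list[tuple]:
    """Run a ticket lookup using values proposed by the agent."""
    # Validate type and value before the query is built.
    if not isinstance(customer_id, int) or isinstance(customer_id, bool):
        raise ValueError("customer_id must be an integer")
    if status not in ALLOWED_STATUSES:
        raise ValueError(f"unsupported status: {status!r}")
    # Placeholders keep agent output out of the SQL text itself.
    cursor = conn.execute(
        "SELECT id, title FROM tickets WHERE customer_id = ? AND status = ? ORDER BY id",
        (customer_id, status),
    )
    return cursor.fetchall()
```

The same two-step pattern (validate, then pass values as data rather than as part of a command string) applies to shell commands and HTTP requests built from agent output.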

2.3 Memory and State Management Code

Review the code that reads from and writes to the agent’s memory, vector store, and any other persistence mechanism.

- Write-path validation: Ensure all memory writes are sanitized. If attackers control stored content, they can influence future behavior.

- Isolation enforcement: In multi-tenant setups, confirm memory access is correctly scoped per user or session at the code level.

- Retention and expiry: Check if outdated or poisoned data can persist. Review expiry and eviction logic, and flag it if missing.
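The three checks above (write-path validation, isolation, and expiry) can be illustrated with a small in-memory store. This is a sketch only: the `MemoryStore` class, the length limit, and the tenant keys are assumptions, and a length check is a stand-in for whatever content validation the real system applies.

```python
import time
from dataclasses import dataclass, field
from typing import Optional

MAX_ENTRY_CHARS = 2000  # hypothetical limit on a single memory entry


@dataclass
class MemoryStore:
    ttl_seconds: float
    # tenant_id -> list of (expires_at, text); tenants never share a list
    _entries: dict = field(default_factory=dict)

    def write(self, tenant_id: str, text: str, now: Optional[float] = None) -> None:
        # Write-path validation: reject empty or oversized entries.
        if not text.strip() or len(text) > MAX_ENTRY_CHARS:
            raise ValueError("memory entry rejected by write-path validation")
        now = time.time() if now is None else now
        self._entries.setdefault(tenant_id, []).append((now + self.ttl_seconds, text))

    def read(self, tenant_id: str, now: Optional[float] = None) -> list[str]:
        # Isolation: only this tenant's entries are ever consulted.
        now = time.time() if now is None else now
        live = [(exp, t) for exp, t in self._entries.get(tenant_id, []) if exp > now]
        self._entries[tenant_id] = live  # evict expired entries on read
        return [t for _, t in live]
```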

2.4 Multi-Agent Communication Code

In multi-agent systems, message passing becomes a critical security surface. It should be reviewed with the same importance as inter-service communication in a microservices architecture.

- Message authentication: Is there cryptographic verification to ensure messages come from the claimed agent? Without authentication, inter-agent communication becomes a direct attack vector.

- Privilege checks on instructions: Does the sub-agent verify that the orchestrator is authorized to issue the instruction? Without this check, a spoofed message could trigger unauthorized actions.

- Input sanitization on received messages: Even messages from trusted agents should be treated as untrusted. If an agent is compromised, its messages can carry attacker-controlled content.
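Message authentication between agents can be sketched with an HMAC over the sender and body, using Python's standard `hmac` module. The message shape and the per-agent shared keys are assumptions made for illustration; this sketch does not cover replay protection or key distribution, and asymmetric signatures may fit better when agents should not share secrets.

```python
import hashlib
import hmac
import json


def _payload(sender: str, body: dict) -> bytes:
    # Canonical serialization so signer and verifier hash identical bytes.
    return json.dumps({"sender": sender, "body": body}, sort_keys=True).encode("utf-8")


def sign(key: bytes, sender: str, body: dict) -> dict:
    """Attach an HMAC-SHA256 tag covering the sender and the body."""
    tag = hmac.new(key, _payload(sender, body), hashlib.sha256).hexdigest()
    return {"sender": sender, "body": body, "tag": tag}


def verify(keys: dict[str, bytes], message: dict) -> bool:
    """Return True only if the tag matches the key registered for the claimed sender."""
    sender = message.get("sender", "")
    key = keys.get(sender)
    if key is None:
        return False  # unknown sender
    expected = hmac.new(key, _payload(sender, message.get("body", {})), hashlib.sha256).hexdigest()
    # Constant-time comparison avoids leaking how much of the tag matched.
    return hmac.compare_digest(expected, str(message.get("tag", "")))
```

A sub-agent would call `verify` before acting on any instruction. Even after verification, the body is still untrusted input, since a compromised but correctly keyed agent can sign malicious content.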

2.5 Dependency and Supply Chain Review

Review the agent’s dependencies, such as model versions, orchestration frameworks, tool libraries, and MCP servers, with the same rigor as a software supply chain assessment.

- Framework vulnerabilities: Common agent frameworks like LangChain, LangGraph, CrewAI, AutoGen, and Amazon Bedrock Agents have known vulnerability patterns. Review the exact version in use against known issues and security guidance.

- Model pinning: Is the underlying model version pinned, or does the system use a ‘latest’ pointer? Model updates can change security-relevant behavior, and uncontrolled updates bypass your security validation process.

- MCP server version pinning: Third-party MCP servers must be pinned to specific versions and never resolved dynamically from live endpoints. Review whether the codebase stores a hash of each tool descriptor and raises an alert on any change (the defense against rug pull attacks).

- MCP config files in source control: Search the repository for .mcp.json, .claude/settings.json, and .vscode/mcp.json. Every one of these files is executable code: they can trigger tool initialization before user consent is confirmed (CVE-2025-59536, CVE-2026-21852). They must be subject to mandatory code review and branch protection, and should never be accepted from untrusted external contributors without review.
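The repository search for MCP config files is easy to automate as a CI step. The sketch below takes a list of repository-relative paths (for example, the output of a file listing command) and flags the three file locations named above at any directory depth; the function name is hypothetical and the target list would be extended for other tools in use.

```python
from pathlib import PurePosixPath

# Config file locations named in the review guidance above.
MCP_CONFIG_TARGETS = (".mcp.json", ".claude/settings.json", ".vscode/mcp.json")


def flag_mcp_configs(repo_paths: list[str]) -> list[str]:
    """Return the paths that end in one of the known MCP config locations."""
    flagged = []
    for raw in repo_paths:
        parts = PurePosixPath(raw).parts
        for target in MCP_CONFIG_TARGETS:
            target_parts = PurePosixPath(target).parts
            # Compare trailing path components so nested projects are caught too.
            if parts[-len(target_parts):] == target_parts:
                flagged.append(raw)
                break
    return sorted(flagged)
```

Flagged paths can then be matched against branch-protection or code-owner rules so that changes to them always require review.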

📋 Code Review Checklist Summary

- System prompts: treated as security-critical code with version control and review gates.

- Prompt construction: no direct string concatenation of user input.

- Tool parameters: sanitized before every tool invocation.

- Output encoding: context-aware based on destination.

- Memory writes: validated and scoped.

- Inter-agent messages: authenticated.

- Credentials: never hardcoded.

- Framework versions: pinned and audited.

- MCP servers: provenance verified.

Phase 3: Security Testing — What Black Box Testing Will Miss

This is where most organizations start their agent security assessment. It is also the least effective phase unless earlier groundwork is complete and the right testing approach is in place.

3.1 The Black Box Trap

Testing an agent only through its conversational output is not a real security assessment; it is just UI testing. The most serious attacks often remain invisible at the output layer.

The Invisible Breach

A tester sends a prompt injection payload, and the agent replies, “I cannot help with that request,” which looks like a pass in a black box test. At the same time, the agent may have triggered an API call with attacker-controlled input in the background. The breach happens, but the test shows no issue. This is a common failure of black box testing and shows why grey box visibility is the minimum requirement for a meaningful assessment.

3.2 Grey Box Testing: The Minimum Standard

Grey box testing with visibility into agent internals is the baseline for any meaningful security assessment. Testing teams need direct access to five key data streams:

| Data Stream | What It Reveals | ASI Risks Exposed |
|---|---|---|
| Tool invocation logs | Which tools are called, with what parameters, and in what sequence. Helps reveal attacks hidden from the UI. | ASI01, ASI02, ASI05 |
| Agent reasoning traces | Internal planning steps show how goals are formed and can expose hijacking attempts. | ASI01, ASI09, ASI10 |
| Memory read/write logs | What is stored, retrieved, or modified. Critical for detecting memory poisoning. | ASI06 |
| Credential & permission logs | Which identities and access levels are exercised per task. | ASI03, ASI04 |
| Inter-agent message logs | Which messages agents exchange and who sent them. Critical for detecting spoofed instructions and cascading failures. | ASI07, ASI08 |
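The gap between a black box verdict and a grey box verdict can be made concrete with a test that inspects tool invocation logs as well as the reply. In this sketch the `AgentRun` record, the tool name, and the canary string are all hypothetical; the idea is to plant a unique canary in the injection payload and then look for it in tool arguments.

```python
import json
from dataclasses import dataclass, field


@dataclass
class AgentRun:
    reply: str
    # Each entry: {"tool": <name>, "args": <dict>} as captured from invocation logs
    tool_calls: list = field(default_factory=list)


def black_box_pass(run: AgentRun) -> bool:
    # Output-only check: a refusal in the reply looks like a pass.
    return "cannot help" in run.reply.lower()


def grey_box_findings(run: AgentRun, forbidden_tools: set, canary: str) -> list[str]:
    """Inspect tool calls for forbidden tools and for the planted canary value."""
    findings = []
    for call in run.tool_calls:
        if call["tool"] in forbidden_tools:
            findings.append(f"forbidden tool invoked: {call['tool']}")
        if canary in json.dumps(call["args"]):
            findings.append(f"attacker-controlled value reached {call['tool']}")
    return findings
```

A run that refuses in the reply but still posts the canary to an external URL passes `black_box_pass` and produces two grey box findings.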

3.3 The Security Testing Program

A comprehensive agentic security testing program runs five distinct test types, each targeting different areas of the ASI threat landscape:

Prompt Injection & Goal Hijack Testing (ASI01, ASI09)

- Direct injection: Send adversarial prompts to override instructions, fake permissions, or redirect the agent’s behavior.

- Indirect injection: Embed malicious instructions in documents, emails, or data the agent retrieves. This is often overlooked but highly effective.

- Multi-turn attacks: Build context across multiple turns to gradually influence the agent’s behavior. Defenses that work for single prompts often fail over sustained interactions.

- RAG poisoning: If the agent uses a RAG knowledge base, test whether injecting content into that store can influence future agent outputs for other users.

Tool & Privilege Abuse Testing (ASI02, ASI03, ASI05)

- Parameter manipulation: Attempt to control the parameters passed to tool invocations through crafted inputs. Focus on tools with write capabilities and code execution.

- Privilege escalation: Test whether the agent can be induced to use credentials or access levels beyond what the current task requires.

- Code execution paths: For agents with code generation or execution capabilities, construct inputs that attempt to produce malicious code that would be executed in the agent’s runtime environment.

Memory & Persistence Testing (ASI06, ASI10)

- Cross-session persistence: Inject content designed to be stored in agent memory, then start a fresh session and verify whether the injected content influences new interactions.

- Cross-user memory leakage: In multi-tenant deployments, test whether one user can read or influence another user’s memory context.

- Long-horizon effects: Memory poisoning attacks often only manifest after multiple subsequent interactions. Design test scenarios that span extended time periods, not just single sessions.

Supply Chain & MCP Testing (ASI04)

- Tool poisoning simulation: Test how the agent handles tool descriptors with hidden instructions. Check if it acts on them silently or if they can intercept and redirect calls to other tools.

- Rug pull simulation: Modify a connected MCP server’s tool description between sessions and verify whether the agent detects the change or silently adopts the new behavior.

- Dependency integrity: Verify that agent dependencies such as models, libraries, and MCP servers match expected checksums and have not been tampered with.

Multi-Agent & Cascade Testing (ASI07, ASI08)

- Message spoofing: In multi-agent architectures, test whether a sub-agent will execute instructions from a spoofed orchestrator message.

- Cascade simulation: Simulate the compromise of one agent and trace what actions it can trigger across the rest of the cluster. Map the full blast radius of a single agent compromise.

3.4 Testing Challenges to Plan For

- Non-determinism: The same malicious input may trigger different tool chains across runs. Run each test scenario multiple times and treat any single successful exploit as a confirmed finding.

- Temporal effects: Memory poisoning and rogue agent behaviors may only manifest after extended interaction. Build long-running scenarios into the test plan.

- Context dependency: Some vulnerabilities appear only after a specific context builds up. Single test cases miss them, so design scenarios that accumulate state before launching the attack.

- Tool simulation fidelity: Mock tools miss real-world integration vulnerabilities. Where possible, test against actual tool integrations in a dedicated test environment.
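Handling non-determinism mostly means repeating each scenario and recording every run in which the exploit landed. A minimal sketch, assuming a hypothetical `scenario` callable that executes one attempt and returns `True` when the exploit succeeded:

```python
from typing import Callable


def run_trials(scenario: Callable[[int], bool], trials: int = 20) -> dict:
    """Repeat a scenario and treat any single success as a confirmed finding."""
    exploit_runs = [i for i in range(trials) if scenario(i)]
    return {
        "trials": trials,
        "exploit_runs": exploit_runs,   # which attempts succeeded, for reproduction
        "finding": bool(exploit_runs),  # one success is enough
    }
```

Keeping the indices of successful runs, together with the logs captured for those runs, helps developers reproduce an exploit that only lands occasionally.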

Phase 4: Production Monitoring — Security That Doesn’t Stop at the Gate

No security assessment is complete at deployment. Agentic systems face evolving attacks that testing alone cannot predict. Production monitoring is not just an operational task; it is a core security control.

What Behavioral Monitoring Must Catch

- Anomalous tool usage patterns: Sudden spikes in API calls, use of rarely invoked tools, or unusual sequences of actions can all signal potential compromise or manipulation.

- Privilege escalation signals: Agents accessing credentials or permission levels beyond their normal operational scope.

- Unusual data access patterns: Large or unexpected retrieval operations from knowledge bases, databases, or external systems.

- Anomalous output characteristics: Outputs that deviate from normal baselines, such as unusual language patterns, unexpected instructions, or atypical response structures, can signal potential issues.

- Cost and resource spikes: Sudden increases in token consumption or API costs frequently signal abuse, denial-of-service conditions, or agents behaving erratically due to compromise.
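A behavioral baseline can start as something very simple, such as comparing the current interval's tool-call count with recent history. The sketch below uses a z-score with Python's `statistics` module; the threshold, the minimum history length, and the choice of a z-score at all are assumptions, and production systems will usually need seasonality-aware or per-tool baselines.

```python
from statistics import mean, pstdev


def is_anomalous(history: list[float], current: float, threshold: float = 3.0) -> bool:
    """Flag `current` if it is more than `threshold` standard deviations from the baseline."""
    if len(history) < 5:
        return False  # too little data to form a baseline
    mu = mean(history)
    sigma = pstdev(history)
    if sigma == 0:
        # Perfectly flat history: any deviation at all is unusual.
        return current != mu
    return abs(current - mu) / sigma > threshold
```

The same function can be applied to token consumption or retrieval volume per interval, which covers the cost and data access signals listed above.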

The Observability Principle

Limiting an agent’s capabilities without visibility reduces risk blindly; you restrict actions, but cannot see where harm still occurs. Observability without control is just monitoring; you can see issues, but cannot prevent them. Both are essential. Together, they form the foundation of a resilient agentic security posture.

Practical Security Controls: Defense in Depth

Assessment findings need to be translated into concrete controls. The following framework organizes controls across the five domains that matter most for agentic security.

| Domain | Key Controls | ASI Risks Addressed |
|---|---|---|
| Input Defense | Prompt firewalls, input classification models, context isolation, structured input enforcement | ASI01, ASI06 |
| Output Safety | Context-aware encoding, output schema validation, sandboxed code execution, CSP headers | ASI02, ASI05 |
| Least Privilege | Scoped agent identities, read-only defaults, short-lived tokens, human approval gates for write operations | ASI02, ASI03, ASI04 |
| Memory Security | Write-path validation, session isolation enforcement, retention/expiry policies, RAG ingestion sanitization | ASI06, ASI10 |
| Observability | Tool invocation logging, reasoning trace preservation, behavioral baseline monitoring, and semantic anomaly detection | ASI01–ASI10 |

Security as a First Principle

AI agents are already part of production systems, and the risks are real. Incidents like the Gemini Memory attack, AutoGPT RCE, and EchoLeak show how agents can be manipulated to persist false data, execute code, or silently exfiltrate information.

The OWASP Top 10 for Agentic Applications 2026 provides a shared way to understand these risks, but its value depends on how it is applied. That means strong architecture reviews to catch trust issues early, code reviews that treat prompts and tool integrations as security critical, testing with grey box visibility, and continuous monitoring for abnormal behavior.

The organizations that succeed will not just move fast; they will build security in from the start. Principles like least privilege, visibility, and human oversight must be built into the design, not added later. Fixing security after deployment in autonomous systems is far more costly and risky.

How Accorian Helps

Accorian’s AI Security practice offers three purpose-built services for organizations building or operating AI agents; each is mapped directly to the assessment phases covered in this blog.

- Architecture Review: Expert-led review of your agent’s trust boundaries, privilege model, memory design, and MCP configuration, before a single test is run. Every finding is mapped to the OWASP Agentic Top 10 with a prioritized remediation path.

- Grey-Box Penetration Testing: Adversarial testing with visibility into tool calls, reasoning traces, memory, and credentials, not just UI output. Covers all threat matrices, including MCP tool poisoning, rug pull simulation, and multi-agent lateral movement.

- Hybrid Code Review + Grey-Box Assessment: Accorian’s most comprehensive engagement with a deep code review of prompts, tool integration, and MCP config files, immediately validated with grey-box testing. Code-level findings directly shape the test plan; live exploitation confirms what is reachable in production.