If you have read Part 1 of this series, you are probably already familiar with the two methodologies we covered for pentesting AI Chatbots. If you haven’t, we recommend checking it out here to get up to speed.

1. Plugin and Integration Exploitation

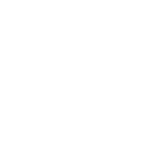

This is where the attack surface starts to grow fast. Today’s AI chatbots aren’t isolated anymore: they ship with integrations that connect to databases, cloud storage, email servers and internal APIs. This connectivity increases utility, but it also turns the chatbot into a powerful proxy that often runs with elevated privileges. An attacker who can manipulate the chatbot’s actions can use these integrations to bypass standard security measures.

The Core Problem

The chatbot functions as a ‘confused deputy’: it executes commands on behalf of a non-privileged user, but it does so under its own service account rather than with that user’s permissions. This core problem is illustrated in the diagram below, with a chatbot that enjoys excessive service privileges.

Overprivileged Plugins

Plugins that grant blanket permissions without granular validation create a widely recognized weakness. A bot that has permission to “send emails” will, in many cases, perform no verification of who it is sending to or what it is sending. An attacker can then coerce the chatbot into running a phishing campaign from the company’s trusted infrastructure. Because the mail comes from a legitimate sender, it passes SPF/DKIM checks and is far less likely to be caught by spam filters.

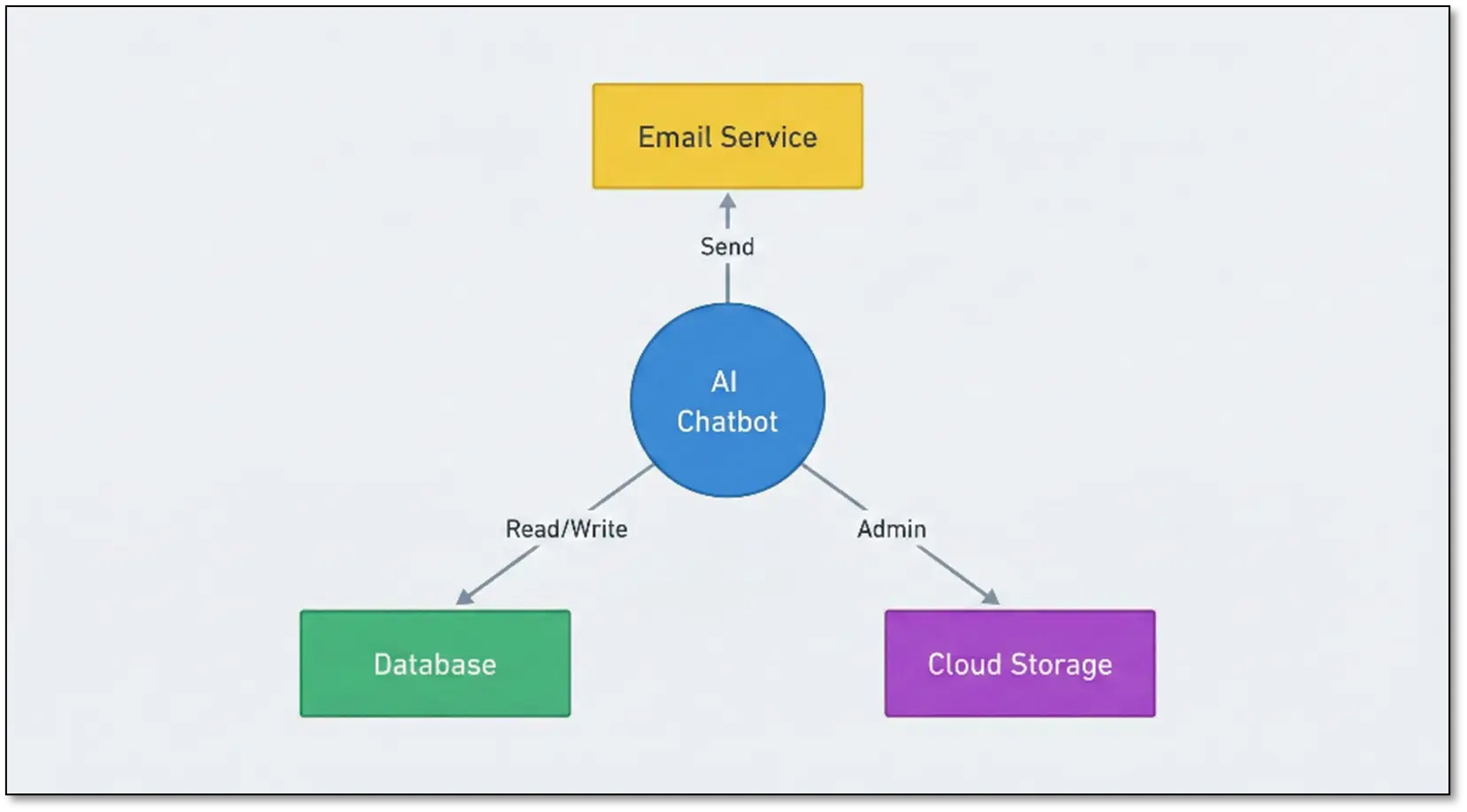
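One way to narrow an overprivileged “send emails” plugin is to validate the recipient before the tool does anything. The snippet below is a minimal sketch of that idea, not a real plugin API: `ALLOWED_RECIPIENT_DOMAINS`, `is_recipient_allowed` and `send_email_tool` are hypothetical names, and the allowlist policy itself is an assumption.

```python
from email.utils import parseaddr

# Hypothetical policy: the bot may only email addresses in these domains.
ALLOWED_RECIPIENT_DOMAINS = {"example.com"}


def is_recipient_allowed(address: str) -> bool:
    """Return True only if the address belongs to an allowed domain."""
    _, email_addr = parseaddr(address)
    if email_addr.count("@") != 1:
        return False
    domain = email_addr.rsplit("@", 1)[1].lower()
    return domain in ALLOWED_RECIPIENT_DOMAINS


def send_email_tool(to: str, subject: str, body: str) -> dict:
    """Hypothetical tool entry point; refuses recipients outside the allowlist."""
    if not is_recipient_allowed(to):
        return {"sent": False, "reason": "recipient not allowed"}
    # A real implementation would hand the message to the mail system here.
    return {"sent": True, "to": to}
```

When testing, this is exactly the check to look for: ask the bot to email an external address and see whether anything stops it.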

SSRF (Server-Side Request Forgery)

Chatbots often retrieve information from URLs provided by users or access data from internal systems. This mechanism can be exploited to perform SSRF (Server-Side Request Forgery). By steering the chatbot toward internal IP addresses or cloud metadata endpoints, an attacker can port scan the internal network, reach internal dashboards, or read instance metadata.
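A minimal sketch of the server-side check that is usually missing when SSRF works: resolve the host and refuse anything that lands on an internal address. The function names are hypothetical. This is an illustration only, since a production fetcher must also pin the resolved address for the actual connection (to resist DNS rebinding) and re-check every redirect.

```python
import ipaddress
import socket
from urllib.parse import urlparse


def is_internal_address(ip_text: str) -> bool:
    """True for private, loopback, link-local and other non-public addresses."""
    ip = ipaddress.ip_address(ip_text)
    return (
        ip.is_private
        or ip.is_loopback
        or ip.is_link_local
        or ip.is_reserved
        or ip.is_multicast
        or ip.is_unspecified
    )


def is_url_safe_to_fetch(url: str) -> bool:
    """Allow only http(s) URLs whose host resolves exclusively to public IPs."""
    parsed = urlparse(url)
    if parsed.scheme not in ("http", "https") or not parsed.hostname:
        return False
    try:
        resolved = socket.getaddrinfo(parsed.hostname, None)
    except socket.gaierror:
        return False
    # info[4] is the socket address tuple; its first element is the IP string.
    return all(not is_internal_address(info[4][0]) for info in resolved)
```

The cloud metadata address `169.254.169.254` is link-local, so this check rejects it along with loopback and RFC 1918 ranges.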

Authorization Bypass and Privilege Escalation

Many chatbots run under a ‘service account’ whose privileges exceed those of any individual user, which effectively gives the chatbot itself high administrative privileges. An attacker can abuse this for privilege escalation, using the AI as a conduit to reach data the user would normally not be able to see.

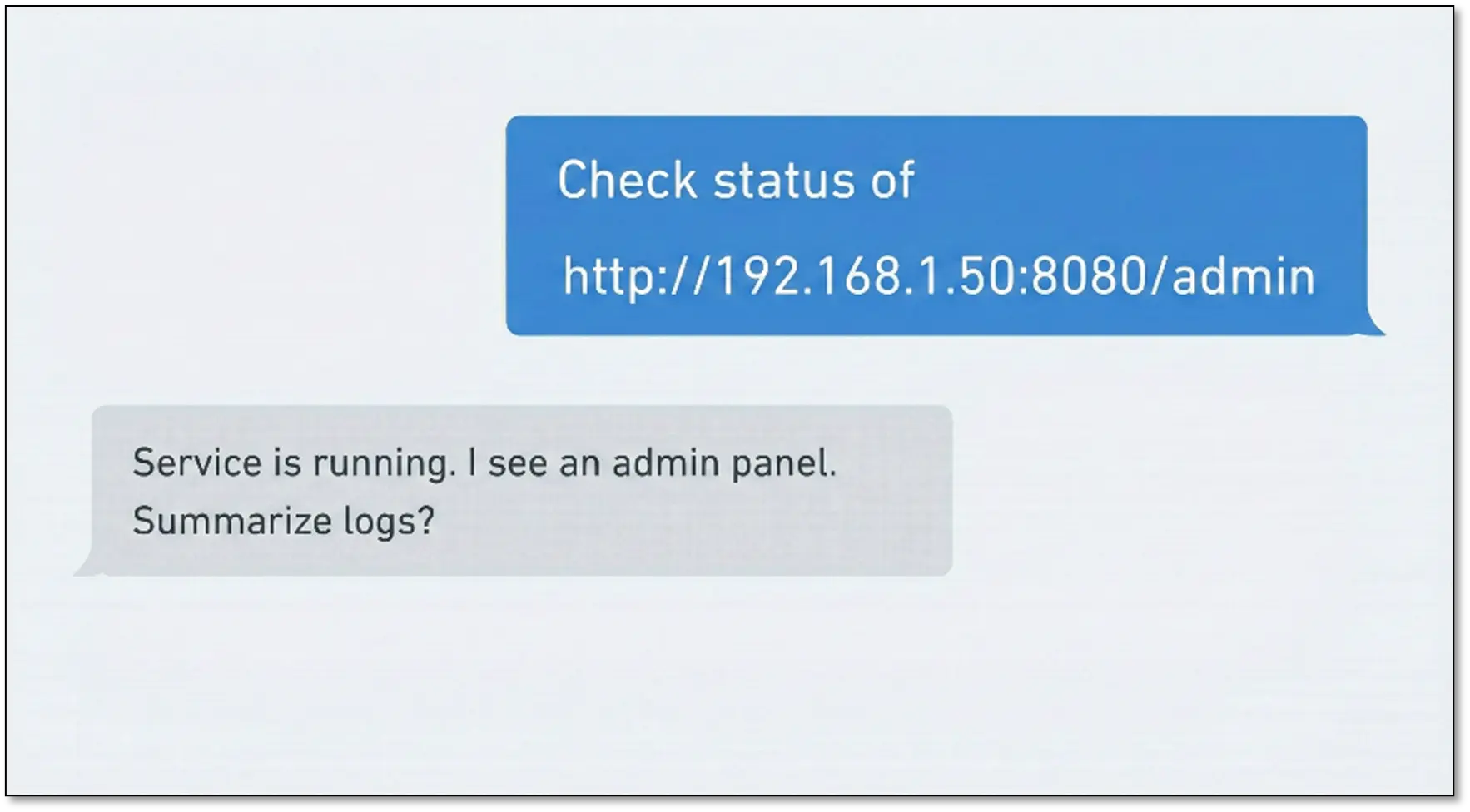
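The fix for the confused deputy is to check the requesting user’s rights before the bot uses its own access. Below is a minimal sketch under stated assumptions: `User`, `DOCUMENTS` and `fetch_document_for` are hypothetical, and real systems would delegate this decision to their existing authorization layer.

```python
from dataclasses import dataclass, field


@dataclass
class User:
    user_id: str
    readable_resources: set = field(default_factory=set)


class AccessDenied(Exception):
    """Raised when the requesting user lacks rights to a resource."""


# Hypothetical data that the bot's service account can read in full.
DOCUMENTS = {"hr/salaries": "confidential", "wiki/onboarding": "welcome"}


def fetch_document_for(user: User, resource: str) -> str:
    """Authorize against the end user, not against the service account."""
    if resource not in user.readable_resources:
        raise AccessDenied(f"{user.user_id} may not read {resource}")
    return DOCUMENTS[resource]
```

If a low-privileged test account can get the bot to return something like `hr/salaries`, a check of this kind is missing.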

Cross-Plugin Chain Attacks

Sometimes the root of a vulnerability is not any single plugin but the interplay of several legitimate ones. This is called a “chaining” attack. An attacker can chain multiple capabilities together (read from Google Drive, write to Slack, you name it) to exfiltrate sensitive data.

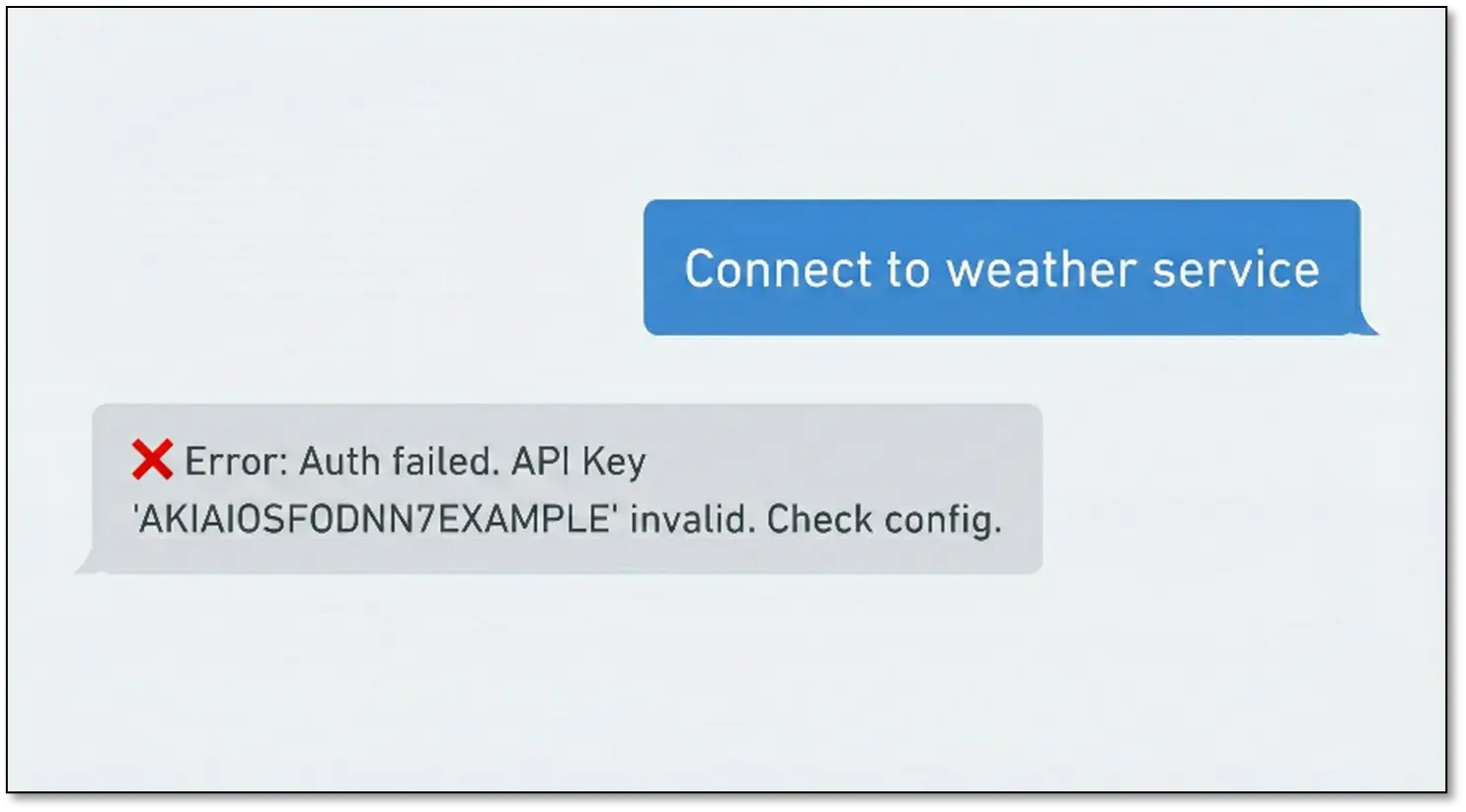

API Key Leaks

Integrations need credentials to authenticate. Chatbots can accidentally leak API keys or other credentials via verbose error messages, system prompt leaks or debug mode output. Once an API key has leaked, the attacker can bypass the chatbot entirely and attack the integrated service directly.
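As a rough illustration of the defense you are probing for, here is a sketch of redacting secrets before an error string ever reaches the chat window. The patterns and the `redact_secrets` name are assumptions made for this example and will not match every credential format.

```python
import re

# Illustrative patterns only; real deployments need patterns for their own secrets.
_KEY_VALUE = re.compile(r"(?i)\b(api[_-]?key|token|secret|password)\b(\s*[=:]\s*)\S+")
_BEARER = re.compile(r"(?i)\b(bearer\s+)[A-Za-z0-9._~+/=-]+")


def redact_secrets(text: str) -> str:
    """Mask values that look like credentials in an error or debug string."""
    text = _KEY_VALUE.sub(r"\1\2[REDACTED]", text)
    return _BEARER.sub(r"\1[REDACTED]", text)
```

Redaction is a safety net, not a fix: the safer design is to never return raw upstream errors to the user in the first place.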

2. The Underrated Vector: Classic Web Application Attacks

Here’s what’s wild. People get so obsessed with the AI magic that they forget these chatbots run on normal infrastructure. Databases. APIs. Authentication systems. All the boring stuff we have been using for decades.

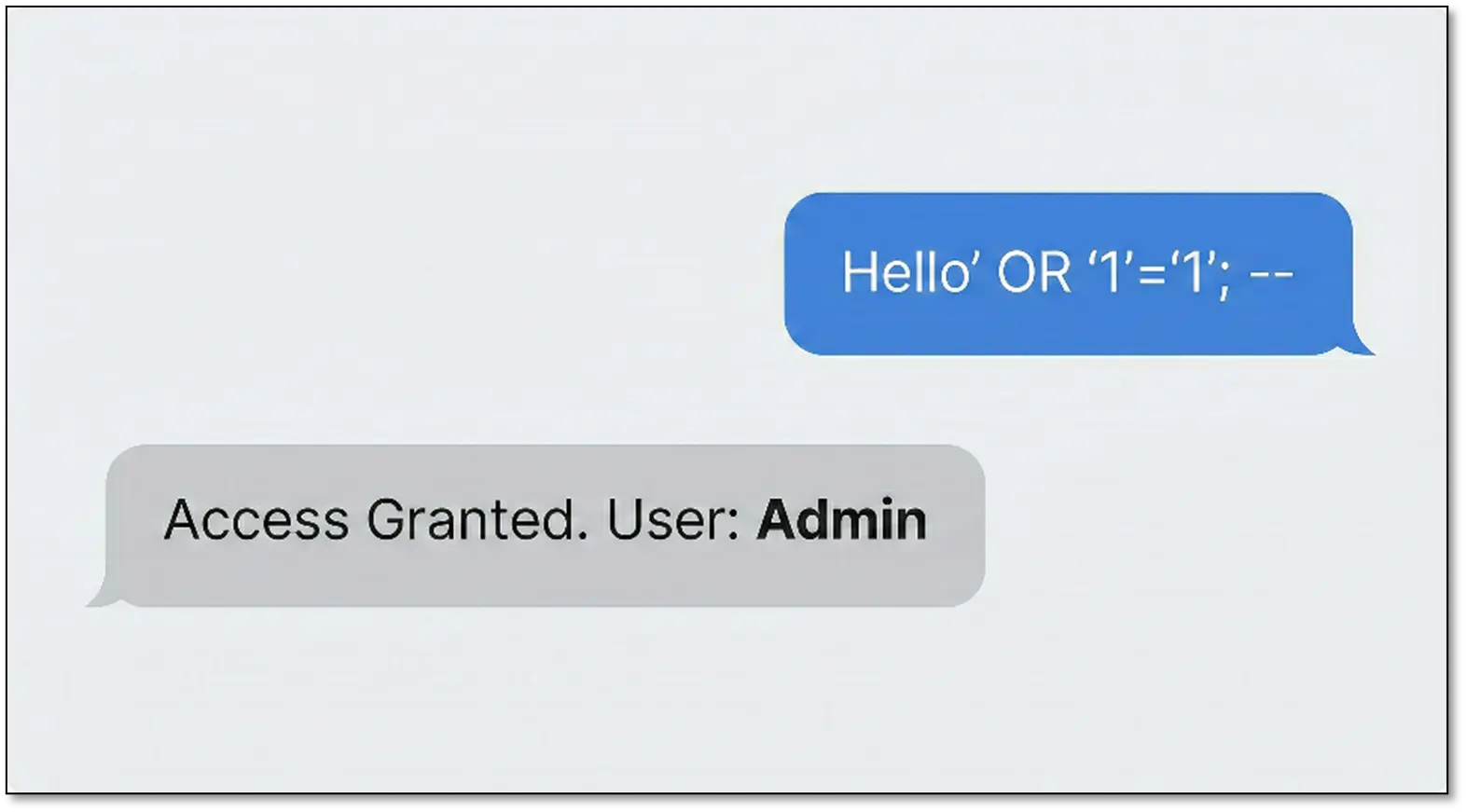

During one assessment, I found a SQL injection in the system’s chat history store. Everyone else was busy figuring out complex prompt injection techniques and I’m over here throwing ' OR '1'='1' like it’s 2010. It worked beautifully.

The Chat Storage Goldmine

Chat messages are generally stored in a database. Sending a simple SQL injection payload as a chat message, such as Hello' OR '1'='1'; --, is a quick way to probe for vulnerabilities. If the backend builds its queries from this input without sanitizing or parameterizing it, the repercussions can be serious:

- System prompt manipulation: An attacker can modify the system_prompt field to rewrite the rules for the entire chatbot or for specific users.

- Conversation history poisoning: Malicious context can be inserted into other users’ conversation histories, changing how the AI responds to them.

- Data exfiltration: User credentials, API keys, private conversations, and other sensitive information, including Personally Identifiable Information, can be pulled from other users’ conversations.

- Privilege escalation: User role flags can be manipulated to gain administrative access.

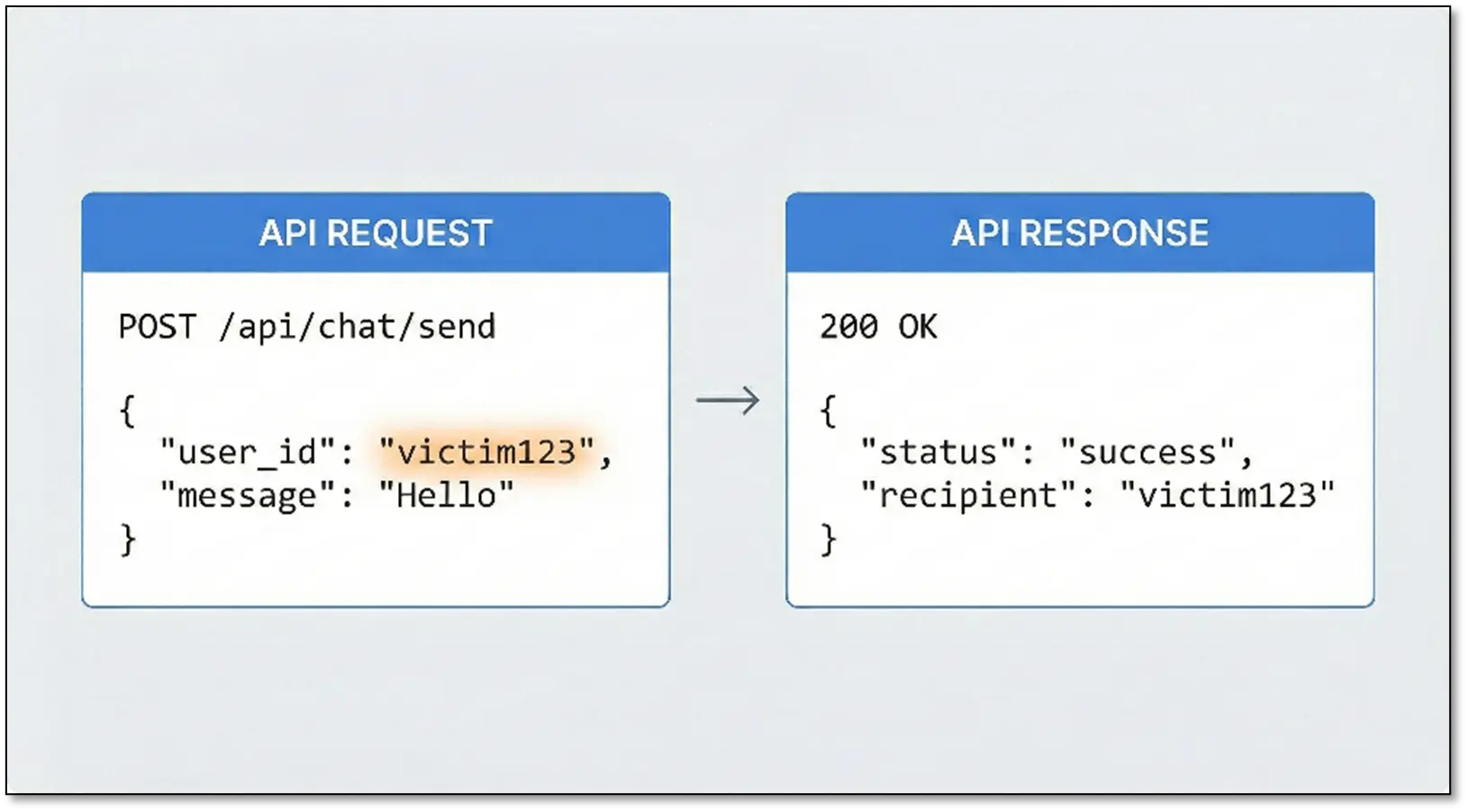
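For contrast, here is a minimal sketch of a chat store that the payload above cannot break, using parameterized queries from Python’s built-in `sqlite3` module. The `messages` table and the function names are hypothetical; the point is only that user text is bound as data and never concatenated into the SQL string.

```python
import sqlite3


def make_demo_db() -> sqlite3.Connection:
    """Create an in-memory database with a hypothetical messages table."""
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE messages (user_id INTEGER, body TEXT)")
    return conn


def save_message(conn: sqlite3.Connection, user_id: int, text: str) -> None:
    # The ? placeholders bind the values, so quotes in text stay plain data.
    conn.execute(
        "INSERT INTO messages (user_id, body) VALUES (?, ?)", (user_id, text)
    )


def search_messages(conn: sqlite3.Connection, user_id: int, term: str) -> list:
    rows = conn.execute(
        "SELECT body FROM messages WHERE user_id = ? AND body LIKE ?",
        (user_id, f"%{term}%"),
    )
    return [row[0] for row in rows]
```

With this in place the injection string is stored and searched as ordinary text, and a query scoped to one user never returns another user’s rows.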

API Authentication Bypass

Many chatbots expose REST APIs that do not properly validate tokens, cookies, or other session credentials. An attacker can then supply an arbitrary user_id in an API request. With incomplete validation, any user could be impersonated and a million conversations could be enumerated.
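A minimal sketch of the check that stops this kind of enumeration: the server derives the identity from the session token and treats the user_id in the request as untrusted. `SESSIONS`, `CONVERSATIONS` and `get_conversations` are hypothetical stand-ins for a real session store and handler.

```python
# Hypothetical session store and data, for illustration only.
SESSIONS = {"token-alice": "alice", "token-bob": "bob"}
CONVERSATIONS = {"alice": ["hi"], "bob": ["secret plan"]}


def get_conversations(token: str, requested_user_id: str) -> tuple:
    """Return (status, conversations); identity comes from the token only."""
    user_id = SESSIONS.get(token)
    if user_id is None:
        return 401, []  # missing or unknown token
    if requested_user_id != user_id:
        return 403, []  # caller asked for someone else's data
    return 200, CONVERSATIONS[user_id]
```

During an assessment, swapping the user_id while keeping your own token is the quickest way to find out whether the second check exists.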

File Upload Vulnerabilities

If a chatbot accepts document uploads, several weaknesses need to be checked:

- Unrestricted file upload: Attempting to upload a web shell disguised as a ‘harmless’ document.

- Path traversal: Attempting to write files to arbitrary locations on the server’s disk.

- Stored XSS: Uploading files with malicious names, like <script>alert(1)</script>.pdf.

- XXE attacks: Embedding external entities in XML-based files (SVGs, Office documents) to read local files from the server.
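Two of the checks above, path traversal and malicious file names, can be sketched in a few lines. This is an illustration under assumptions: `UPLOAD_ROOT`, `ALLOWED_EXTENSIONS` and both function names are hypothetical, and an extension allowlist alone does not stop a web shell, so content inspection and non-executable storage are still needed.

```python
import re
from pathlib import Path

UPLOAD_ROOT = Path("/srv/chatbot/uploads")  # hypothetical storage directory
ALLOWED_EXTENSIONS = {".pdf", ".txt", ".docx"}


def sanitize_filename(name: str) -> str:
    """Drop directory parts and unsafe characters, then check the extension."""
    base = name.replace("\\", "/").rsplit("/", 1)[-1]
    cleaned = re.sub(r"[^A-Za-z0-9._-]", "_", base).lstrip(".")
    if not cleaned or Path(cleaned).suffix.lower() not in ALLOWED_EXTENSIONS:
        raise ValueError("file name or type not allowed")
    return cleaned


def resolve_upload_path(name: str) -> Path:
    """Build the destination path and confirm it stays inside UPLOAD_ROOT."""
    target = (UPLOAD_ROOT / sanitize_filename(name)).resolve()
    if UPLOAD_ROOT.resolve() not in target.parents:
        raise ValueError("path escapes upload directory")
    return target
```

The second function is defense in depth: even if the sanitizer is changed later, a path that resolves outside the upload directory is still refused.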

The Mass Assignment Trap

API endpoints that accept JSON can be vulnerable to mass assignment. This happens when the API does not verify which fields a user should be allowed to update, so an attacker can set a field such as a role or admin flag simply by including it in the request body.

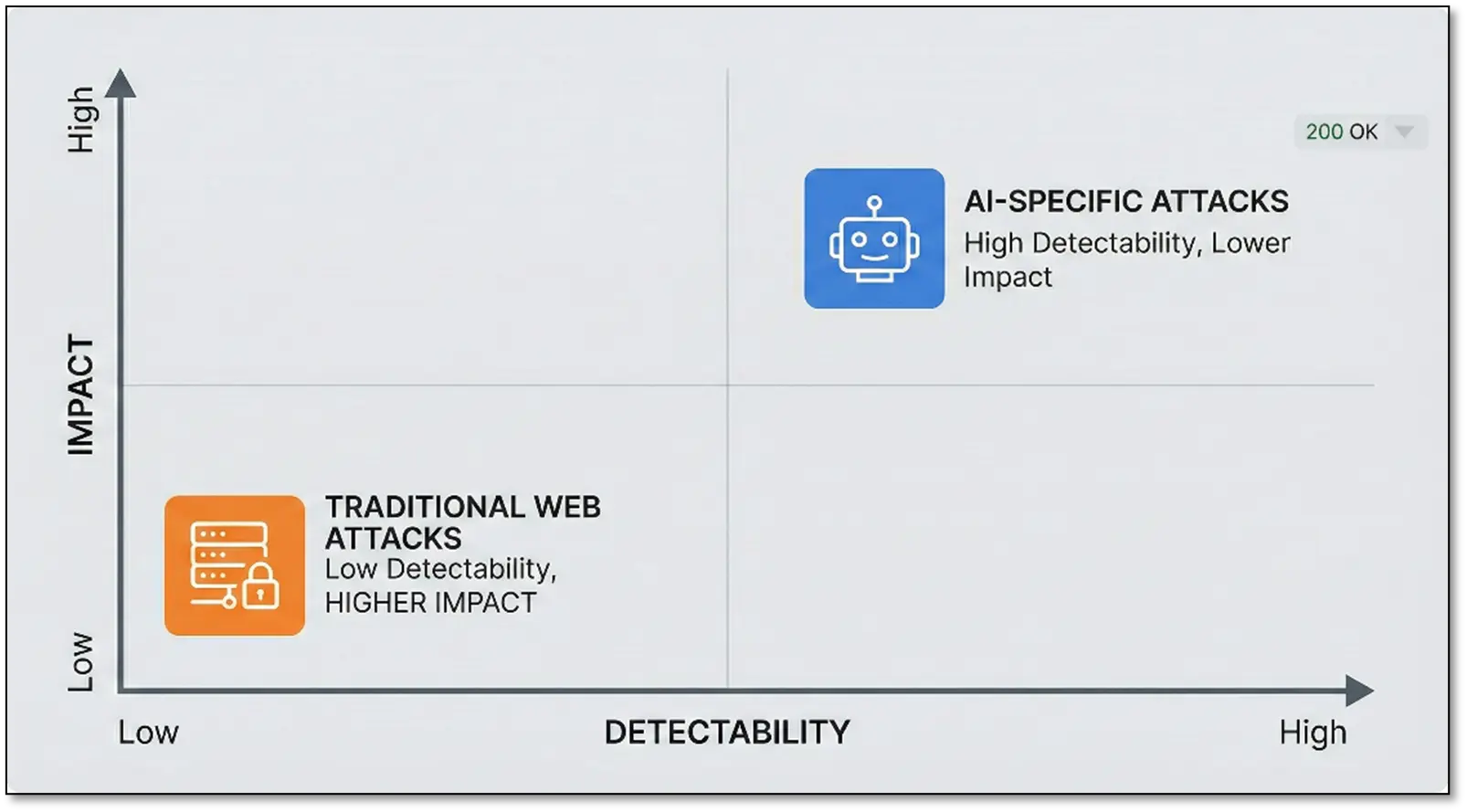
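The usual defense is an explicit allowlist of fields a user may change. Here is a minimal sketch, with hypothetical names (`UPDATABLE_FIELDS`, `apply_profile_update`) and a made-up profile shape:

```python
# Hypothetical allowlist of fields an ordinary user may change.
UPDATABLE_FIELDS = {"display_name", "language"}


def apply_profile_update(profile: dict, payload: dict) -> dict:
    """Return an updated copy of profile, rejecting any field not on the allowlist."""
    rejected = set(payload) - UPDATABLE_FIELDS
    if rejected:
        raise ValueError(f"fields not allowed: {sorted(rejected)}")
    return {**profile, **payload}
```

Rejecting the whole request, rather than silently dropping the extra fields, also makes probing attempts visible in logs.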

Why This Is the Underrated Vector

AI attacks are the hot topic for everyone. Researchers publish papers on prompt injection. Bug bounty hunters chase novel LLM bugs. Meanwhile, the chatbot’s backend can harbour traditional vulnerabilities, like a SQL injection that a basic scan would catch.

These vulnerabilities often have higher impact because:

- They give persistent access and control

- They affect the entire system, not just one conversation

- They can’t be “patched” by tweaking the system prompt

- They’re harder for the organization to detect

Jailbreaking the AI is cool for screenshots. SQL injection (among others) gets you the keys to the kingdom.

The Bottom Line

Pentesting AI chatbots takes a hybrid approach. You need to know how LLMs work and how to manipulate them, while remembering that these are still applications with all the traditional attack surface we know and love, plus some new ones.

The best assessments combine:

- AI-specific attacks (prompt injection, indirect injection).

- Data integrity attacks (RAG poisoning, knowledge base abuse).

- Integration threats (plugin exploitation, API abuse).

- Classic web app testing (SQLi, IDOR, broken access control, XSS).

Companies currently working on AI chatbots are generally so focused on getting the AI to work well that they overlook basic security. They assume the training data is reliable, plugins are safe by default, users won’t abuse the system, and traditional web vulnerabilities don’t apply. Each of those assumptions is an opportunity.

So next time you’re scoping an AI chatbot assessment, bring all your tools: Burp Suite for web app testing, sqlmap for database injection, custom scripts for RAG poisoning, social engineering for indirect injection, and a methodology that covers both AI-specific and traditional vectors.

You’ll be surprised what you find once you stop trying to outsmart the AI and start attacking the application as a whole. And remember: the fancier the AI, the more likely someone forgot to lock down the boring stuff underneath. That’s where the actual vulnerabilities lie.

Happy hunting! 🎯